Sharing Data Between Threads in Rust

Rust is renowned for its emphasis on safety, particularly concurrent safety. One of the challenges in concurrent programming is the safe sharing of data between threads. In this article, we’ll delve into how Rust allows for data sharing amongst threads, ensuring safety and efficiency.

The Problem: Data Races

Before we dive into the solutions, let’s first understand the problem. A data race occurs when two or more threads access the same memory location at the same time, at least one of them is writing, and the accesses are not synchronized. Data races lead to undefined behaviour, which is a situation Rust works hard to prevent.

The Rust Way

In Rust, the type system and the borrow checker are designed to prevent data races. There are two key concepts:

- Ownership: Every value has a single owner.

- Borrowing: Data can be borrowed immutably (multiple borrows allowed) or mutably (only one borrow at a time).

With these principles, Rust ensures that:

- Multiple threads can read data simultaneously.

- Only one thread can write data at a time.

- If one thread is writing, no other thread can read that data.
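The rules above can be sketched with scoped threads from the standard library (std::thread::scope, available since Rust 1.63). This is a minimal illustration, not the only way to share borrows; the function name read_then_write is hypothetical.

```rust
use std::thread;

/// Reads `data` from several threads at once, then mutates it from one thread.
/// Returns the sum computed by each reader and the final vector.
fn read_then_write(mut data: Vec<i32>) -> (Vec<i32>, Vec<i32>) {
    // Phase 1: many shared (`&`) borrows may coexist across threads.
    let sums = thread::scope(|s| {
        let mut handles = Vec::new();
        for _ in 0..3 {
            handles.push(s.spawn(|| data.iter().sum::<i32>()));
        }
        handles
            .into_iter()
            .map(|h| h.join().unwrap())
            .collect::<Vec<i32>>()
    });

    // Phase 2: a single exclusive (`&mut`) borrow; no reader exists any more.
    thread::scope(|s| {
        s.spawn(|| data.push(4));
    });

    (sums, data)
}

fn main() {
    let (sums, data) = read_then_write(vec![1, 2, 3]);
    println!("reader sums: {:?}, after write: {:?}", sums, data);
}
```

Trying to spawn a reader and a writer inside the same scope would be rejected at compile time, which is exactly the guarantee listed above.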

Sharing Data

1. Arc<T>

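The Arc<T> type is an atomically reference-counted pointer. When the last reference to an Arc is dropped, the data it points to is also dropped. It allows multiple threads to read shared data simultaneously; on its own it gives only shared, read-only access, so mutation needs an additional primitive such as a Mutex.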

Example:

    use std::sync::Arc;
    use std::thread;

    fn main() {
        // Shared, read-only data behind an atomically reference-counted pointer.
        let numbers = Arc::new(vec![1.0, 2.0, 3.0]);

        let handles: Vec<_> = (0..3).map(|i| {
            // Each thread gets its own handle to the same allocation.
            let numbers = Arc::clone(&numbers);
            thread::spawn(move || {
                println!("{:?}", numbers[i]);
            })
        }).collect();

        // Wait for every thread to finish.
        for handle in handles {
            handle.join().unwrap();
        }
    }

In the above example, Arc::clone creates a new reference to the same data, increasing the reference count.
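To make the reference count visible, here is a small sketch that uses Arc::strong_count to observe it before cloning, while the clones are alive, and after the threads have finished. The function name observe_counts is made up for this illustration.

```rust
use std::sync::Arc;
use std::thread;

/// Returns the strong count (before cloning, with three clones alive,
/// after the threads holding the clones have finished).
fn observe_counts() -> (usize, usize, usize) {
    let numbers = Arc::new(vec![1.0, 2.0, 3.0]);
    let before = Arc::strong_count(&numbers);

    // Three extra handles to the same allocation.
    let clones: Vec<Arc<Vec<f64>>> = (0..3).map(|_| Arc::clone(&numbers)).collect();
    let during = Arc::strong_count(&numbers);

    let handles: Vec<_> = clones
        .into_iter()
        .map(|n| thread::spawn(move || n.iter().sum::<f64>()))
        .collect();
    for handle in handles {
        // Each thread drops its clone when its closure finishes.
        handle.join().unwrap();
    }
    let after = Arc::strong_count(&numbers);

    (before, during, after)
}

fn main() {
    println!("{:?}", observe_counts());
}
```

The vector itself is never copied; only the pointer and the counter change.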

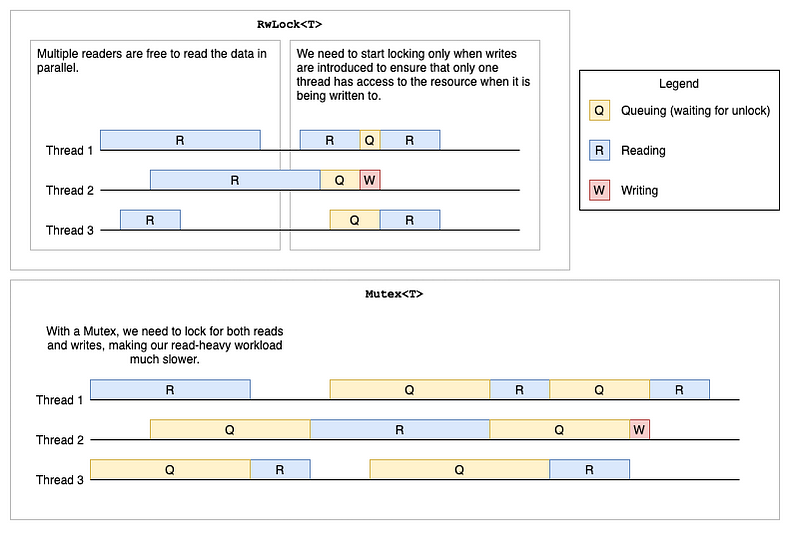

2. Mutex

A mutex (short for “mutual exclusion”) ensures that only one thread can modify the data it protects at a time.

    use std::sync::{Arc, Mutex};
    use std::thread;

    fn main() {
        // Arc shares ownership across threads; Mutex guards the value inside.
        let counter = Arc::new(Mutex::new(0));

        let handles: Vec<_> = (0..10).map(|_| {
            let counter = Arc::clone(&counter);
            thread::spawn(move || {
                // Blocks until this thread holds the lock.
                let mut num = counter.lock().unwrap();
                *num += 1;
                // The guard `num` is dropped here, which unlocks the mutex.
            })
        }).collect();

        for handle in handles {
            handle.join().unwrap();
        }

        println!("Result: {}", *counter.lock().unwrap());
    }

Here, each thread increments the counter. The lock method locks the mutex, and if another thread has already locked it, lock will block the current thread until it can get access. The lock is released automatically when the guard returned by lock goes out of scope.
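Because the lock is tied to the lifetime of the guard, a common pattern is to confine the guard to a small block so other threads are not kept waiting. The sketch below shows this; collect_ids and its parameter are hypothetical names chosen for the example.

```rust
use std::sync::{Arc, Mutex};
use std::thread;

/// Appends each worker's id to a shared log, holding the lock only briefly.
fn collect_ids(workers: usize) -> Vec<usize> {
    let log = Arc::new(Mutex::new(Vec::new()));

    let handles: Vec<_> = (0..workers)
        .map(|id| {
            let log = Arc::clone(&log);
            thread::spawn(move || {
                {
                    // The guard lives only inside this block.
                    let mut guard = log.lock().unwrap();
                    guard.push(id);
                } // guard dropped here: the mutex is unlocked
                // Work that does not need the lock would go here.
            })
        })
        .collect();

    for handle in handles {
        handle.join().unwrap();
    }

    let mut ids = log.lock().unwrap().clone();
    ids.sort(); // the order in which threads ran is not deterministic
    ids
}

fn main() {
    println!("{:?}", collect_ids(4));
}
```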

3. Channels

Channels in Rust are a way to send data between threads. There are two halves to a channel: the sender and the receiver.

    use std::sync::mpsc;
    use std::thread;

    fn main() {
        // tx is the sending half, rx the receiving half.
        let (tx, rx) = mpsc::channel();

        thread::spawn(move || {
            let val = String::from("hi");
            // send moves val into the channel; this thread can no longer use it.
            tx.send(val).unwrap();
        });

        // recv blocks until a message arrives.
        let received = rx.recv().unwrap();
        println!("Got: {}", received);
    }

In this example, the spawned thread sends a message (a String) to the main thread, which receives and prints it.

The Underlying Principles of Mutex<T> and Arc<T>

Mutex Internals:

A mutex, by design, provides exclusive access to the data it wraps. But how does it guarantee this?

- Locking Mechanism: A thread must first obtain the lock when it wants to access the data. If another thread has already locked the mutex, any subsequent thread trying to obtain the lock will be blocked until the initial thread releases the lock.

- Poisoning: Rust’s mutexes have a safeguard against panics. If a thread panics while holding a mutex, the mutex becomes “poisoned.” Subsequent access to the mutex will result in an Err variant, alerting the thread about the previous panic.
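Poisoning can be observed directly. In this sketch a worker thread panics on purpose while holding the lock; the main thread then checks is_poisoned and recovers the value through PoisonError::into_inner. The function name poison_and_recover is an assumption for illustration, and whether recovering is appropriate depends on whether the data may be left half-updated.

```rust
use std::sync::{Arc, Mutex};
use std::thread;

/// Poisons a mutex by panicking while the lock is held, then recovers the data.
/// Returns (was the mutex poisoned?, value found inside).
fn poison_and_recover() -> (bool, i32) {
    let data = Arc::new(Mutex::new(0));

    let worker = {
        let data = Arc::clone(&data);
        thread::spawn(move || {
            let mut guard = data.lock().unwrap();
            *guard = 7;
            panic!("simulated failure while holding the lock");
        })
    };
    // join() returns Err because the thread panicked.
    assert!(worker.join().is_err());

    let poisoned = data.is_poisoned();
    // lock() now returns Err(PoisonError); into_inner() still yields the guard.
    let value = match data.lock() {
        Ok(guard) => *guard,
        Err(poison) => *poison.into_inner(),
    };
    (poisoned, value)
}

fn main() {
    // The panic message of the worker thread is printed to stderr.
    println!("{:?}", poison_and_recover());
}
```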

Atomic Reference Counting:

Arc<T>, short for Atomically Reference Counted, is a thread-safe version of Rc<T>. The atomicity ensures that the reference count can be safely incremented or decremented across threads.

- Atomic Operations: Operations on the reference count (like incrementing) are atomic, meaning they’re done in a way that guarantees that other threads can’t see an inconsistent state.

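- Memory Ordering: Each atomic operation carries an explicit memory ordering. Arc’s implementation picks orderings that make every earlier use of the data visible to the thread that drops the last reference, so the value is freed exactly once and only after all other owners are done with it.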
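The counting idea can be sketched with a bare atomic counter. RefCount below is a hypothetical, heavily simplified type (it manages no memory and is not the real Arc implementation); it only shows an atomic increment, an atomic decrement, and how exactly one owner learns that it was the last.

```rust
use std::sync::atomic::{fence, AtomicUsize, Ordering};
use std::thread;

/// Hypothetical, simplified reference counter (not the real `Arc` internals).
struct RefCount {
    count: AtomicUsize,
}

impl RefCount {
    fn new() -> Self {
        // The creator is the first owner.
        RefCount { count: AtomicUsize::new(1) }
    }

    /// Registers a new owner with one indivisible increment.
    fn acquire(&self) {
        self.count.fetch_add(1, Ordering::Relaxed);
    }

    /// Unregisters an owner; returns true if this was the last one.
    fn release(&self) -> bool {
        if self.count.fetch_sub(1, Ordering::Release) == 1 {
            // Make every earlier use by other owners visible before cleanup.
            fence(Ordering::Acquire);
            return true;
        }
        false
    }
}

/// Hands one reference to each of `n` threads and counts how many
/// `release` calls reported "last owner".
fn count_last_owners(n: usize) -> usize {
    let rc = RefCount::new();
    let mut last = 0;
    thread::scope(|s| {
        let mut handles = Vec::new();
        for _ in 0..n {
            rc.acquire(); // taken on behalf of the thread before it starts
            handles.push(s.spawn(|| rc.release()));
        }
        for h in handles {
            if h.join().unwrap() {
                last += 1;
            }
        }
    });
    if rc.release() {
        last += 1; // the original owner lets go
    }
    last
}

fn main() {
    println!("last-owner notifications: {}", count_last_owners(4));
}
```

However the threads interleave, only one call sees the count reach zero, which is what allows the shared value to be dropped exactly once.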

More on Channels: mpsc and Beyond

Rust’s standard library provides a multiple-producer, single-consumer channel, often abbreviated as mpsc.

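- Asynchronous and Synchronous Channels: mpsc::channel creates an asynchronous channel with an unbounded buffer, so send never blocks. mpsc::sync_channel creates a bounded channel whose senders block while the buffer is full; with a bound of zero, each send waits for a matching receive. The “asynchronous” here is unrelated to async/await; channels for async code exist in the broader ecosystem, for instance, in the tokio crate.

- Cloning Senders: You can clone the Sender part of a channel to have multiple producers.

- Closing Channels: Once all Sender clones are dropped, the channel is closed. The Receiver detects this when recv returns an Err, and iterating over the Receiver ends.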
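The three points above can be combined in one sketch: a bounded channel from mpsc::sync_channel, one cloned sender per producer, and a receiver that stops once every sender is gone. The function name gather and the buffer size of 2 are arbitrary choices for the example.

```rust
use std::sync::mpsc;
use std::thread;

/// Sends one message from each of `producers` threads over a bounded channel
/// and returns everything received, sorted.
fn gather(producers: usize) -> Vec<usize> {
    // Bounded channel: `send` blocks while 2 messages are already waiting.
    let (tx, rx) = mpsc::sync_channel(2);

    for id in 0..producers {
        let tx = tx.clone(); // one sender clone per producer
        thread::spawn(move || {
            tx.send(id).unwrap();
        });
    }
    drop(tx); // drop the original so only the clones keep the channel open

    // The iterator ends when every sender has been dropped.
    let mut received: Vec<usize> = rx.iter().collect();
    received.sort(); // arrival order is not deterministic

    // After disconnection, `recv` reports an error instead of blocking.
    assert!(rx.recv().is_err());
    received
}

fn main() {
    println!("{:?}", gather(3));
}
```

Forgetting the drop(tx) line is a classic mistake: the original sender stays alive, so the receiving loop never ends.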

RwLock<T>

The RwLock is similar to a Mutex, but allows for more flexibility:

- Multiple Readers: Multiple threads can read the data simultaneously.

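- Single Writer: Only one thread can write at a time, and only while no readers hold the lock.

- Lock Priority: The standard library does not guarantee whether waiting writers or readers go first; the policy depends on the underlying operating system’s implementation, so don’t rely on writers taking precedence.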
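A minimal sketch of both access modes, assuming a shared vector as the protected data; rwlock_demo is a name invented for this example.

```rust
use std::sync::{Arc, RwLock};
use std::thread;

/// Reads the shared vector from three threads, then appends one element.
/// Returns the length seen by each reader and the final contents.
fn rwlock_demo() -> (Vec<usize>, Vec<i32>) {
    let shared = Arc::new(RwLock::new(vec![1, 2, 3]));

    // Several readers may hold the read lock at the same time.
    let readers: Vec<_> = (0..3)
        .map(|_| {
            let shared = Arc::clone(&shared);
            thread::spawn(move || {
                let guard = shared.read().unwrap();
                guard.len()
            })
        })
        .collect();
    let lens: Vec<usize> = readers.into_iter().map(|h| h.join().unwrap()).collect();

    // A writer needs exclusive access; the block limits how long it is held.
    {
        let mut guard = shared.write().unwrap();
        guard.push(4);
    }

    let snapshot = shared.read().unwrap().clone();
    (lens, snapshot)
}

fn main() {
    println!("{:?}", rwlock_demo());
}
```

RwLock tends to pay off when reads greatly outnumber writes; for short critical sections a plain Mutex is often just as good.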

Barrier

A Barrier is a sync primitive that allows multiple threads to synchronize the beginning of some computation.
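As a sketch of what that looks like, each thread below logs a "setup" step, waits at the barrier, and only then logs a "work" step. The function name run_phases and the Mutex-protected log are assumptions made for the illustration.

```rust
use std::sync::{Arc, Barrier, Mutex};
use std::thread;

/// Each thread records "setup", waits at the barrier, then records "work".
/// Returns the events in the order they were logged.
fn run_phases(n: usize) -> Vec<&'static str> {
    let barrier = Arc::new(Barrier::new(n));
    let events = Arc::new(Mutex::new(Vec::new()));

    let handles: Vec<_> = (0..n)
        .map(|_| {
            let barrier = Arc::clone(&barrier);
            let events = Arc::clone(&events);
            thread::spawn(move || {
                // The lock is released at the end of this statement.
                events.lock().unwrap().push("setup");
                barrier.wait(); // blocks until all n threads have arrived
                events.lock().unwrap().push("work");
            })
        })
        .collect();

    for handle in handles {
        handle.join().unwrap();
    }
    let log = events.lock().unwrap().clone();
    log
}

fn main() {
    println!("{:?}", run_phases(3));
}
```

No "work" entry can appear before the last "setup" entry, because no thread passes wait until every thread has reached it.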

Unsafe and Atomics

Rust offers low-level atomic operations through the std::sync::atomic module for cases when the provided abstractions don’t offer the desired performance characteristics. They’re called “atomics” because their operations are indivisible: other threads can’t see an operation at an intermediate state. While powerful, they require careful handling to ensure correctness.
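For a simple shared counter, an atomic integer can replace a Mutex entirely, and no unsafe code is involved. The sketch below assumes a hypothetical count_hits function; Relaxed ordering is enough here because only the final total matters and join synchronizes with each finished thread.

```rust
use std::sync::atomic::{AtomicUsize, Ordering};
use std::sync::Arc;
use std::thread;

/// Increments a shared counter `per_thread` times from each of `threads` threads.
fn count_hits(threads: usize, per_thread: usize) -> usize {
    let hits = Arc::new(AtomicUsize::new(0));

    let handles: Vec<_> = (0..threads)
        .map(|_| {
            let hits = Arc::clone(&hits);
            thread::spawn(move || {
                for _ in 0..per_thread {
                    // One indivisible read-modify-write; no lock needed.
                    hits.fetch_add(1, Ordering::Relaxed);
                }
            })
        })
        .collect();

    for handle in handles {
        handle.join().unwrap();
    }
    hits.load(Ordering::Relaxed)
}

fn main() {
    println!("{}", count_hits(4, 1000));
}
```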

External Crates

The Rust ecosystem offers a wealth of concurrency-related crates. Some notable ones include:

- crossbeam: Offers data structures and utilities for concurrent and parallel programming.

- rayon: A data parallelism library that makes it easy to convert sequential computations into parallel ones.

- tokio and async-std: Asynchronous runtimes that provide tools for writing concurrent code using async/await syntax.

Lock-Free Data Structures

In certain high-concurrency situations, even the overhead of acquiring and releasing locks can become a bottleneck. While more complex, lock-free data structures can offer better performance in these scenarios. In Rust, the crossbeam crate offers several lock-free structures, like the epoch-based garbage collector and lock-free queues.

Scoped Threads in crossbeam

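The Rust standard library’s thread::spawn doesn’t allow threads to hold references to the stack of the spawning thread, because a spawned thread can outlive its parent’s stack frame; the closure passed to it must be 'static. The crossbeam crate provides a way to spawn scoped threads, which are guaranteed to finish before the scope ends and can therefore safely access the parent thread's stack. Since Rust 1.63, the standard library offers the same capability through std::thread::scope.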
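Here is a sketch of the scoped-thread idea using the standard library’s std::thread::scope (Rust 1.63 or later) rather than crossbeam, so that it runs without external dependencies. The function name double_in_chunks is hypothetical, and chunk_size must be greater than zero.

```rust
use std::thread;

/// Doubles every element in place, using one scoped thread per chunk.
fn double_in_chunks(values: &mut [i32], chunk_size: usize) {
    thread::scope(|s| {
        for chunk in values.chunks_mut(chunk_size) {
            // Each thread gets an exclusive borrow of a disjoint chunk
            // of the caller's data; no Arc or Mutex is required.
            s.spawn(move || {
                for v in chunk {
                    *v *= 2;
                }
            });
        }
    }); // every spawned thread is joined before `scope` returns
}

fn main() {
    let mut values = vec![1, 2, 3, 4, 5];
    double_in_chunks(&mut values, 2);
    println!("{:?}", values);
}
```

Because the scope joins all threads before returning, the borrow checker can prove that the borrowed slice outlives every thread that touches it.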

Concurrency vs Parallelism

While they’re related, it’s important to differentiate concurrency from parallelism:

- Concurrency: Handling many things at once, which might or might not be happening simultaneously.

- Parallelism: Doing many things at the same time.

Rust provides tools for both. While the primitives discussed above largely deal with concurrency, tools like rayon are designed for parallelism, focusing on splitting computation across multiple cores.

Check out more articles about Rust in my Rust Programming Library!

Memory Model and Hardware Considerations

While Rust’s abstractions shield developers from many of the intricacies of concurrent programming, underlying hardware and the memory model play a crucial role. Cache coherence, memory reordering, and atomic operations can behave differently across architectures. Rust’s atomic operations have no default ordering: every operation takes an explicit Ordering argument. Ordering::SeqCst is the strongest and the easiest to reason about across platforms, while weaker orderings such as Relaxed, Acquire, and Release are available if needed and if one knows what they’re doing.
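A common use of explicit orderings is publishing a value through a flag. In this sketch the producer writes a payload and then sets a flag with Release; the consumer waits for the flag with Acquire, which guarantees it then sees the payload. The names publish_and_read, payload, and ready are illustrative assumptions.

```rust
use std::sync::atomic::{AtomicBool, AtomicU32, Ordering};
use std::sync::Arc;
use std::thread;

/// Publishes a value from one thread and reads it from another.
fn publish_and_read() -> u32 {
    let payload = Arc::new(AtomicU32::new(0));
    let ready = Arc::new(AtomicBool::new(false));

    let producer = {
        let (payload, ready) = (Arc::clone(&payload), Arc::clone(&ready));
        thread::spawn(move || {
            payload.store(42, Ordering::Relaxed);
            // Release: all writes above become visible to any thread that
            // observes `true` through an Acquire load of `ready`.
            ready.store(true, Ordering::Release);
        })
    };

    // Acquire pairs with the Release store in the producer.
    while !ready.load(Ordering::Acquire) {
        thread::yield_now();
    }
    let seen = payload.load(Ordering::Relaxed);

    producer.join().unwrap();
    seen
}

fn main() {
    println!("{}", publish_and_read());
}
```

With Relaxed on the flag as well, the language would no longer guarantee that the payload is visible once the flag reads true, even if it happens to work on some hardware.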

Stay tuned, and happy coding!

All the best,

CTO | Tech Lead | Senior Software Engineer | Cloud Solutions Architect | Rust 🦀 | Golang | Java | ML AI & Statistics | Web3 & Blockchain